The No-Code Rule Builder: Nested Fraud Logic in Minutes

Fraud analysts now compose nested AND/OR detection rules, backtest against real traffic, and ship under maker-checker approval — no code required.

RTD Team

Run-True Decision

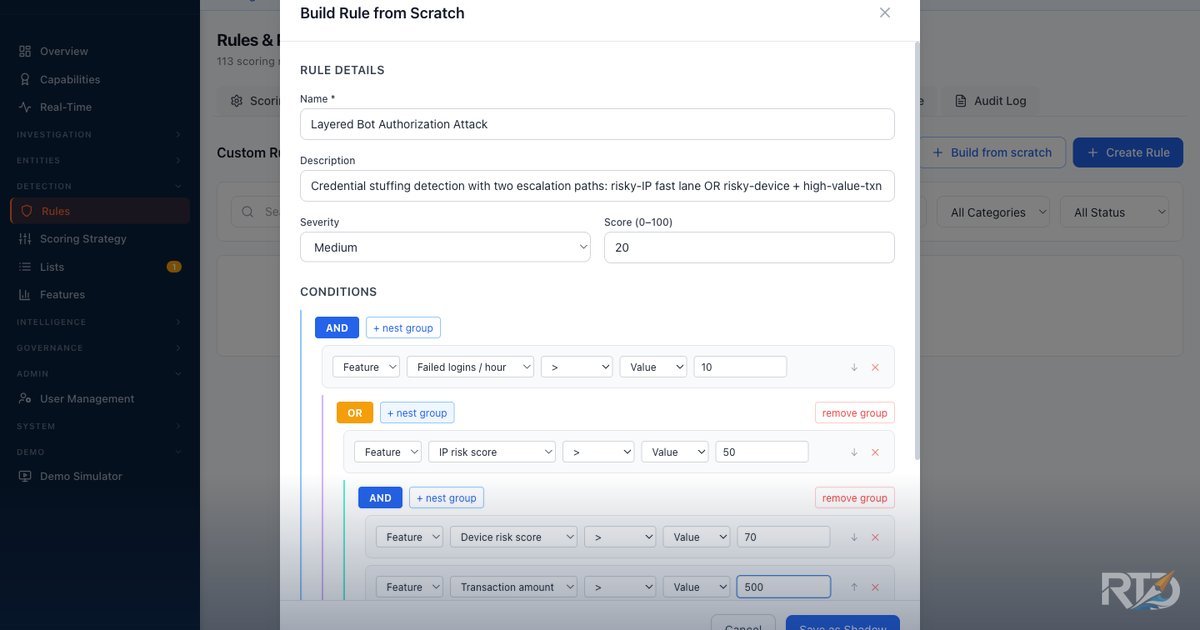

A fraud analyst at a Southeast Asian bank opens a dashboard, picks four features from a dropdown — failed logins per hour, IP risk score, device risk score, transaction amount — clicks + nest group twice, flips an inner junction to OR, and saves. The resulting rule is a three-level decision tree:

(failed logins per hour > 10 AND (IP risk score > 50 OR (device risk score > 70 AND transaction amount > 500)))

Elapsed time: under two minutes. No engineer. No ticket. No redeploy. No SQL. No JSON editing. The same rule, two months ago, would have taken a four-week sprint — scope it, write it, test it, deploy it, measure it. The analyst who actually sees the fraud first would have filed a ticket and waited for the next release.

The No-Code Rule Builder, now live in the Fraud Decision Engine dashboard, closes that gap. Here is what it does — and why that matters for any bank running real-time fraud scoring in 2026.

The fraud-logic ownership gap

Every fraud team has lived this pattern: an analyst spots a new attack signature on Tuesday afternoon, opens a ticket, and waits for the next sprint cycle. By the time the rule ships, the bot network has already moved on. The people closest to the fraud are the furthest from the controls.

The workaround most banks have settled for is a combination of hard-coded rules buried in engineering repos and ML scores with no human-readable explanation. Neither holds up under the questions regulators are starting to ask. When the Monetary Authority of Singapore's Technology Risk Management Guidelines ask "why was this transaction flagged?", the answer cannot be "the model said so." It has to be a sentence.

Nested logic, composed in plain English

The builder accepts arbitrary AND/OR nesting. The root can be a two-leaf AND; click + nest group on any child and it becomes a new sub-tree. The junction is toggled per group, so the outer tree can be AND while an inner sub-tree is OR. The four-leaf tree above has three levels and mixed junctions; the engine imposes no upper bound on nesting depth.

As the analyst builds the tree, a readable preview renders beneath it:

(failed logins per hour > 10 AND (IP risk score > 50 OR (device risk score > 70 AND transaction amount > 500)))

That sentence is the rule. It is what the analyst reads. It is what the supervisor reads on approval. It is what the internal auditor reads during the quarterly review. It is what a regulator reads when they ask for the reason a transaction was declined. The same logic is evaluated by the engine at runtime — there is no translation layer, no drift between what the UI shows and what the backend executes. The sentence and the evaluation are the same object, walked by the same recursive evaluator that powers the pre-configured fraud detection templates shipped with the platform.
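A minimal sketch of the "same object" idea, in Python: one tree structure is walked by a renderer to produce the preview sentence and by a recursive evaluator to score an event. The class and function names (Leaf, Group, render, evaluate) are hypothetical and not taken from the platform, only the > comparison is modelled, and treating a missing feature as a non-match is an assumption.

```python
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Leaf:
    feature: str       # feature name as shown in the dropdown
    threshold: float   # leaf matches when the feature value exceeds this


@dataclass(frozen=True)
class Group:
    junction: str                          # "AND" or "OR"
    children: Tuple["Node", ...]           # leaves or nested groups


Node = Union[Leaf, Group]


def render(node: Node) -> str:
    """Walk the tree and produce the readable preview sentence."""
    if isinstance(node, Leaf):
        return f"{node.feature} > {node.threshold:g}"
    joined = f" {node.junction} ".join(render(child) for child in node.children)
    return f"({joined})"


def evaluate(node: Node, event: dict) -> bool:
    """Walk the same tree and decide whether an event matches."""
    if isinstance(node, Leaf):
        value = event.get(node.feature)
        # Assumption: a missing feature never matches.
        return value is not None and value > node.threshold
    results = (evaluate(child, event) for child in node.children)
    return all(results) if node.junction == "AND" else any(results)


# The four-leaf, three-level rule from the article.
rule = Group("AND", (
    Leaf("failed logins per hour", 10),
    Group("OR", (
        Leaf("IP risk score", 50),
        Group("AND", (
            Leaf("device risk score", 70),
            Leaf("transaction amount", 500),
        )),
    )),
))

print(render(rule))
print(evaluate(rule, {"failed logins per hour": 12, "IP risk score": 60}))
```

Because render and evaluate both recurse over the same Group and Leaf values, there is no second representation that could drift from the first; in this sketch, render(rule) reproduces the preview sentence shown above.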

Pre-save backtest against real traffic

Before saving, the analyst clicks Simulate. The builder replays the proposed condition against the last 24 hours of real events from the tenant's own history and returns, synchronously, the count of historical transactions that would have matched, the match rate, and a sample of events with their request IDs and decision outcomes.

This is the part that quietly changes how rules get written. Without a backtest, every new rule is a gamble — deploy it, wait, see whether it blocks legitimate traffic or misses the attack entirely. With a backtest, the analyst sees the blast radius before saving. A rule that would have matched 4,000 transactions overnight is obviously too aggressive. A rule that matches zero is obviously too narrow. The analyst tunes the thresholds against real evidence, not against a guess.

Because the simulation uses the same evaluator as production, the backtest count is not an approximation — it is the exact number of transactions that rule would have flagged had it been live during that window.
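The backtest described in this section can be sketched as a replay loop, shown here in Python. Everything named below is an assumption for illustration: the simulate function, the event fields (request_id, timestamp, decision), and the sample events are made up, and the predicate stands in for the production evaluator.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Iterable


def simulate(
    predicate: Callable[[dict], bool],
    events: Iterable[dict],
    now: datetime,
    window: timedelta = timedelta(hours=24),
    sample_size: int = 5,
) -> dict:
    """Replay a proposed condition against events inside the lookback window."""
    cutoff = now - window
    in_window = [e for e in events if cutoff <= e["timestamp"] <= now]
    matched = [e for e in in_window if predicate(e)]
    return {
        "evaluated": len(in_window),
        "matched": len(matched),
        "match_rate": len(matched) / len(in_window) if in_window else 0.0,
        # A small sample so the analyst can inspect individual hits.
        "sample": [
            {"request_id": e["request_id"], "decision": e["decision"]}
            for e in matched[:sample_size]
        ],
    }


# Illustrative events only; not real traffic.
now = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
events = [
    {"request_id": "req-1", "timestamp": now - timedelta(hours=1),
     "decision": "allow", "failed logins per hour": 14},
    {"request_id": "req-2", "timestamp": now - timedelta(hours=5),
     "decision": "allow", "failed logins per hour": 2},
    {"request_id": "req-3", "timestamp": now - timedelta(hours=23),
     "decision": "review", "failed logins per hour": 30},
    {"request_id": "req-4", "timestamp": now - timedelta(hours=30),
     "decision": "allow", "failed logins per hour": 50},  # outside the window
]

result = simulate(lambda e: e["failed logins per hour"] > 10, events, now)
print(result)
```

Passing the predicate in as a parameter mirrors the point made above: if the simulation and the live path call the same evaluator, the replay count reflects what the rule would have matched in that window.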

Maker-checker built into the code path

Analysts do not ship rules to production by themselves. Under the maker-checker governance that the platform now enforces per tenant, any rule proposed by one user is queued for approval by a different user before it takes effect. Separation of duties is a standard control for privileged financial systems, but the implementation has two properties that matter in an audit:

- It is enforced by code, not policy. The API returns 202 pending_approval on save. The approval endpoint explicitly rejects any attempt by the proposing user to self-approve, returning a visible "You proposed this change and cannot review it yourself" error. The control cannot be bypassed by a user with the right permissions flag because it is not a permissions check; it is an identity check on the approval path.
- It is observable. Every proposal, approval, and rejection writes to an immutable audit log with cryptographically signed entries. When an auditor asks "who approved this rule, and what did it look like at the moment of approval?", the answer is a single query.
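The identity check in the first point can be sketched as follows, in Python. This is an illustration under stated assumptions, not the platform's code: Proposal, propose, approve, and SelfApprovalError are hypothetical names, and only the pending_approval status and the error message come from the text above.

```python
from dataclasses import dataclass
from typing import Optional


class SelfApprovalError(Exception):
    """Raised when the proposer tries to review their own change."""


@dataclass
class Proposal:
    rule_text: str
    proposed_by: str
    status: str = "pending_approval"    # a saved rule is queued, not live
    approved_by: Optional[str] = None


def propose(rule_text: str, user_id: str) -> Proposal:
    # Saving never activates the rule; it only creates a pending proposal.
    return Proposal(rule_text=rule_text, proposed_by=user_id)


def approve(proposal: Proposal, reviewer_id: str) -> Proposal:
    # Identity check, not a permissions check: no role or flag is consulted,
    # so no permission can grant the proposer the right to self-approve.
    if reviewer_id == proposal.proposed_by:
        raise SelfApprovalError(
            "You proposed this change and cannot review it yourself"
        )
    proposal.status = "approved"
    proposal.approved_by = reviewer_id
    return proposal


pending = propose("(failed logins per hour > 10)", user_id="analyst-a")
try:
    approve(pending, reviewer_id="analyst-a")
except SelfApprovalError as err:
    print(err)
approve(pending, reviewer_id="supervisor-b")
print(pending.status)
```

The comparison is between two user identities on the approval path, which is why granting a user broader permissions does not change the outcome.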

For banks that need to demonstrate separation of duties to a regulator or an internal audit team, this turns a governance question into a technical fact.
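One way to picture a tamper-evident audit trail is a hash chain in which each entry is authenticated and also commits to the entry before it. The Python sketch below uses an HMAC from the standard library as a stand-in; the article does not say which signature scheme the platform uses, and the function names, entry fields, and key handling here are all assumptions.

```python
import hashlib
import hmac
import json


def _mac(key: bytes, body: dict) -> str:
    # Canonical JSON so the same body always produces the same MAC.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def append_entry(log: list, key: bytes, action: str, actor: str, rule_text: str) -> dict:
    """Append an entry that commits to the previous entry's MAC."""
    body = {
        "action": action,        # e.g. "proposed", "approved", "rejected"
        "actor": actor,
        "rule": rule_text,       # the rule as it looked at this moment
        "prev": log[-1]["mac"] if log else "",
    }
    entry = {**body, "mac": _mac(key, body)}
    log.append(entry)
    return entry


def verify(log: list, key: bytes) -> bool:
    """Recompute every MAC and check that the chain links are unbroken."""
    prev = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "mac"}
        if body["prev"] != prev:
            return False
        if not hmac.compare_digest(_mac(key, body), entry["mac"]):
            return False
        prev = entry["mac"]
    return True


key = b"demo-key-for-illustration-only"
log: list = []
append_entry(log, key, "proposed", "analyst-a", "(failed logins per hour > 10)")
append_entry(log, key, "approved", "supervisor-b", "(failed logins per hour > 10)")
print(verify(log, key))
```

Storing the rule text in each entry is what lets a single lookup answer both "who approved this rule" and "what did it look like at the moment of approval"; editing any stored entry afterwards causes verify to fail.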

On-premise, by design

The builder ships as part of the same on-premise stack the rest of the Fraud Decision Engine uses — Docker Compose, Ansible, no new external dependencies. The rule definitions, the backtest engine, the approval workflow, and the audit log all run inside the bank's perimeter. Nothing about a rule — not the condition tree, not the simulation results, not the approval chain — leaves the deployment.

That constraint is deliberate. Several regional vendors offer no-code rule authoring as a hosted console; a bank that wants to use those consoles has to accept that the specification of every fraud rule it writes is being stored by someone else. For most Southeast Asian banks working under Bank Negara Malaysia's Risk Management in Technology policy, the Monetary Authority of Singapore's data residency expectations, or Bank Indonesia's cloud outsourcing framework, that trade is a non-starter. The builder is designed to match the capability of those hosted consoles without inheriting their deployment model.

What changes next

The harder thing the builder unlocks is cadence. A fraud team that can go from "we just saw a new attack pattern" to "a shadow-mode rule is running against live traffic" in the same afternoon is playing a different game than a team that has to file a ticket. The sprint boundary between analyst intuition and production control dissolves. The rule that catches tomorrow's attack does not have to wait for next month's release.

This post previews what we will be showing at Money20/20 in Bangkok. The builder is live on the sandbox at fde-sandbox.run-true.com; contact us for credentials if you would like to try it yourself.

Run-True Decision is building a fraud decision engine purpose-built for Southeast Asian banks. Talk to us to learn more.
