TLS at the load balancer is not end-to-end: what banks actually need for data-in-transit

Why TLS-terminated-at-the-LB leaks fraud payloads internally, and what end-to-end TLS 1.3 + mTLS actually looks like inside a bank data center.

RTD Team

Run-True Decision

TLS terminated at the load balancer is not end-to-end. Every hop after the LB — app to database, app to cache, engine to alerting — travels in cleartext across shared infrastructure. Most on-prem fraud vendors leave that gap open and rely on network segmentation to cover it. Regulators have decided that's no longer acceptable, and if you are selecting a vendor in 2026, "we support TLS" is the wrong checkbox to look at.

The myth of the trusted internal network

"We already have network segmentation" is the architectural answer most vendors fall back on. It is also the architectural answer most attackers no longer care about.

Inside a bank data center, the realistic threat model is not "someone from the internet reads our wire." It is lateral movement after any single pod or VM is compromised, insider access by a service account that should have been rotated, credential theft from a forgotten CI container, and east-west traffic through shared ingress or service mesh sidecars that were misconfigured two hardening cycles ago. Segmentation slows these down. It does not stop them.

Zero-trust is not a buzzword for the internal network. It is the assumption regulators have moved to because, empirically, perimeter-only controls have kept failing. Post-incident reports from SEA and Hong Kong banks over the last three years read the same way: the attacker got onto one internal host, then walked sideways for weeks through traffic nobody was encrypting because "it was already behind the firewall." The audit question in 2026 is no longer whether you encrypt data at rest. It is whether every hop inside your perimeter is authenticated and encrypted, and whether you can prove it.

What regulators actually require — and when they changed their minds

These rules are not aspirational. They are current audit findings that banks are already being written up on.

PCI DSS 4.0 req 4.2.1 still scopes its mandate to PAN transmitted over "open, public networks," the same phrasing many vendors quote from 3.2.1. What 4.0 tightened is the detail: certificates protecting PAN in transit must be confirmed valid, unexpired, and unrevoked, and req 4.2.1.1 adds an inventory of trusted keys and certificates (both future-dated requirements, mandatory from 31 March 2025). The standard's own guidance describes encrypting PAN on internal networks as good practice, so a vendor that quotes the "open, public networks" wording as a reason to leave internal hops in cleartext is citing the floor, not the expectation.

MAS TRM 2021 §11.1.3 and §11.2 require cryptographic controls for data in transit between systems, not only at the perimeter. In the Technology Risk Management Guidelines, the Monetary Authority of Singapore frames this as part of a broader expectation that financial institutions apply defence-in-depth to sensitive data flows — explicitly including flows between application tiers, between services, and between the production estate and any adjacent environment.

HKMA's Secure Tertiary Data Backup (SA-2) guidance and the broader OSPAR expectations for outsourced service providers in Singapore take the same position: segregation is necessary but not sufficient. Data flows carrying sensitive information must be both identity-authenticated and encrypted between components, with cryptographic evidence captured in the audit trail.

None of the three regulators prescribe a specific protocol. All three have stopped accepting "the network is internal" as an answer.

Why TLS-at-the-LB is not end-to-end

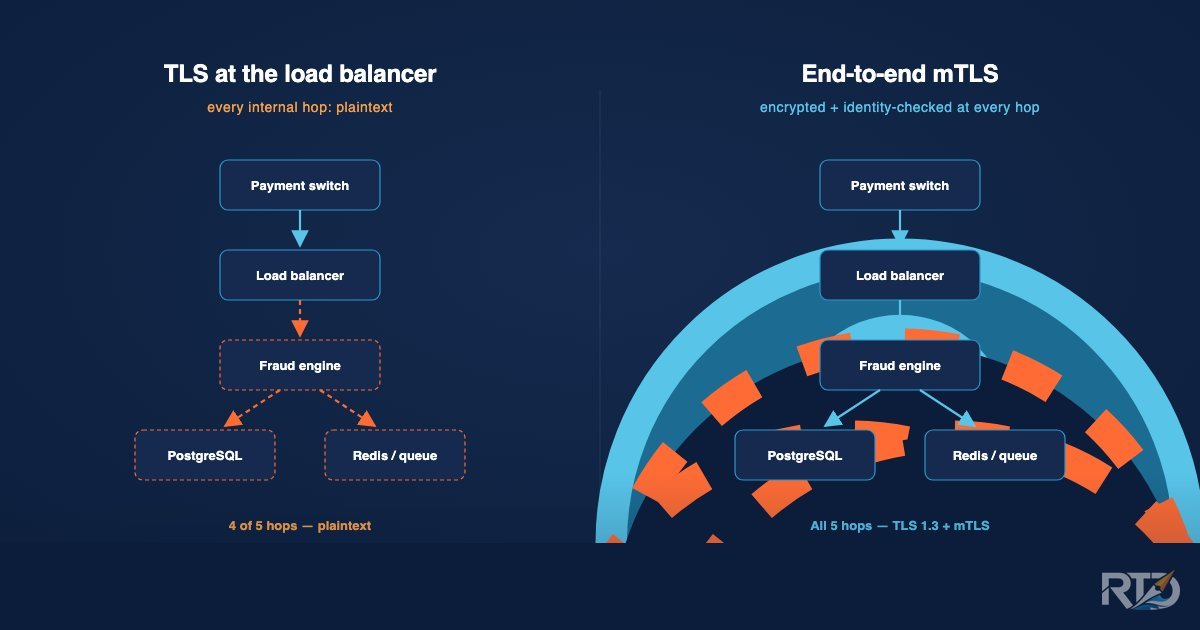

Picture the standard on-prem fraud deployment. A payment switch sends a transaction to the fraud engine's load balancer over TLS. The LB terminates. Inside the perimeter, the app talks to the scoring engine in cleartext, the engine queries PostgreSQL in cleartext, writes to Redis in cleartext, and emits an alert to a queue that the case management service reads in cleartext. Five hops. One is encrypted.

Every plaintext hop is a failure mode. A compromised node, a forgotten tcpdump, a misconfigured service mesh sidecar, a debug flag that logged raw request bodies for three weeks in July: any one of these exposes the transaction payload. And the payload is the sensitive data: amount, beneficiary account number, device fingerprint, source IP, identity document reference. For fraud detection, the payload is not incidental; it is the whole point.

This is worse than the cardholder-data case PCI originally targeted. A payment authorization message may contain a primary account number, transaction amount, merchant category, and enough device telemetry to re-identify the customer. If any of the four plaintext hops leaks, the attacker has a live feed of the bank's real-time transaction stream, which is more valuable than any single card dump because it does not expire when the card is reissued.

mTLS is about identity, not just encryption

One-way TLS proves the server is who it claims to be. Inside a data center, that is the wrong question. The right question is: is this connection actually coming from the fraud engine, or from something pretending to be the fraud engine?

Mutual TLS with per-service certificates turns every connection into a cryptographic identity check. A stolen database password alone is no longer enough to read the fraud engine's writes, because without the corresponding client certificate the connection never establishes. This is the same attack-class closure that SPIFFE/SVID patterns provide in service-mesh architectures — except mTLS with per-service certs does not require running a full mesh alongside the on-prem fraud deployment. For most banks, a mesh is a bigger operational commitment than the security gain justifies; per-service mTLS delivers the identity guarantee without the sidecar tax.
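As a rough illustration of the identity check described above, here is a minimal Python sketch using only the standard-library `ssl` module. It is not RTD's implementation. The function names, the file-path parameters, and the allow-list of service names are all hypothetical, and it assumes each service's leaf certificate carries its name as a DNS subjectAltName.

```python
import ssl


def make_mtls_server_context(ca_file=None, cert_file=None, key_file=None):
    """Server-side context that aborts the handshake unless the client
    presents a certificate chaining to a trusted CA."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.verify_mode = ssl.CERT_REQUIRED  # no client cert, no connection
    if ca_file:  # hypothetical path: CA that issues per-service client certs
        ctx.load_verify_locations(cafile=ca_file)
    if cert_file:  # hypothetical path: this server's own leaf cert and key
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def peer_dns_names(peercert):
    """DNS subjectAltName entries from the dict that
    SSLSocket.getpeercert() returns after a verified handshake."""
    entries = (peercert or {}).get("subjectAltName", ())
    return {value for kind, value in entries if kind == "DNS"}


def is_allowed_peer(peercert, allowed_services):
    """True only if the verified client cert names an expected service."""
    return bool(peer_dns_names(peercert) & set(allowed_services))
```

In this sketch a database front end would call `is_allowed_peer(conn.getpeercert(), {"fraud-engine.internal"})` after the handshake, so a valid password arriving over a connection with the wrong certificate, or none, is still refused.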

The operational consequence is simple: a compromised service account password becomes noisy rather than catastrophic. The attacker has to also obtain and import a valid, non-revoked client certificate issued to that specific service on that specific host. That is a different, louder attack path — and one the bank's PKI monitoring is much more likely to notice.

What "done properly" looks like operationally

These are the bits that come up in the second POC meeting, not the first. A cryptographically perfect design that cannot be operated is worse than a slightly weaker design that the bank's platform team actually runs correctly.

Cert rotation without downtime. Hot-reload the certificate material in-process rather than restarting the service. A fraud engine that has to restart to pick up a new cert is a fraud engine that gets rotated less often than it should, which means expired certs in production and 3am pages that nobody wants to own.
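One way to hot-reload in-process is to rebuild the TLS context whenever the certificate file changes on disk. The sketch below assumes that approach. `HotReloader` and its `build` callback are hypothetical names, and in a real service `build` would construct an `ssl.SSLContext` and call `load_cert_chain` on the rotated files.

```python
import os
import threading


class HotReloader:
    """Rebuilds a value (for example an ssl.SSLContext) when the watched
    file changes, without restarting the process."""

    def __init__(self, path, build):
        self._path = path
        self._build = build  # callable: path -> freshly built object
        self._lock = threading.Lock()
        self._stamp = None
        self._value = None

    def _file_stamp(self):
        st = os.stat(self._path)
        # inode changes on atomic rename; size and mtime on in-place writes
        return (st.st_mtime_ns, st.st_size, st.st_ino)

    def current(self):
        stamp = self._file_stamp()
        with self._lock:
            if stamp != self._stamp:
                try:
                    fresh = self._build(self._path)
                except Exception:
                    if self._value is None:
                        raise  # nothing usable yet: surface the error
                    # otherwise keep serving the previous material
                else:
                    self._value = fresh
                    self._stamp = stamp
            return self._value
```

Calling `current()` for each accepted connection means new handshakes pick up the rotated certificate while connections already in flight finish on the old one.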

Revocation with a bounded window. Either CRL distribution with a publication frequency the bank can live with, or OCSP with a validated stapling path. "We support both" is a fine answer only if the operational runbook commits to one and measures the revoke-to-effective window.
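For the CRL path, the revoke-to-effective window can be bounded with simple arithmetic. The sketch below assumes a conservative worst case: the certificate is revoked just after a CRL is published, and each relying service refreshed its cached copy just before the next one arrived. The function and parameter names are illustrative.

```python
from datetime import timedelta


def worst_case_revocation_window(publish_interval, client_refresh_interval,
                                 distribution_delay=timedelta(0)):
    """Upper bound on the time between revoking a cert and every relying
    service rejecting it, under the assumptions stated above."""
    return publish_interval + distribution_delay + client_refresh_interval


def meets_target(window, target):
    """True if the measured or computed window fits the runbook target."""
    return window <= target
```

With hourly CRL publication, a 5-minute distribution delay, and services refreshing every 15 minutes, the bound is 1 hour 20 minutes, which is the number the runbook would have to commit to and then measure against.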

Two deployment modes. Banks are not all at the same PKI maturity. Mode A: the vendor ships a self-contained CA with the product, suitable for banks that do not yet run internal PKI at scale. Mode B: the bank supplies its own PKI; the vendor validates and distributes certificates issued by the bank's CA but never holds the root. A vendor that only offers Mode A is selling into Tier 3. A vendor that only offers Mode B is selling into Tier 1. Serious on-prem fraud deployment needs both.

Key custody. Private keys never leave the host they are used on. No "please email us your cert bundle." A vendor that asks for private-key material by email has told you everything you need to know about the rest of their security posture.

Outage recovery. When a cert expires at 3am, what happens? A fraud engine that fails closed (rejects traffic rather than passing it cleartext) is the defensible default. A fraud engine that fails open is a regulatory incident waiting to be written up. The break-glass path should preserve the audit trail — "we turned off mTLS for 20 minutes at 3:14am and here is the signed record of who authorized it and why."
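A fail-closed decision with an auditable break-glass override might look like the following sketch. It is an assumption-laden illustration, not a description of any product: the record fields are hypothetical, and HMAC-SHA256 stands in for whatever signing mechanism the bank's audit trail actually uses.

```python
import hashlib
import hmac
import json
from datetime import datetime


def sign_record(record, key):
    """Deterministic HMAC-SHA256 over the break-glass record."""
    payload = json.dumps(record, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def break_glass_active(record, signature, key, now):
    """Override counts only if the record is untampered and `now` falls
    inside its authorized window."""
    if not hmac.compare_digest(sign_record(record, key), signature):
        return False
    starts = datetime.fromisoformat(record["starts"])
    ends = datetime.fromisoformat(record["ends"])
    return starts <= now < ends


def admit(cert_not_after, now, override_active=False):
    """Fail closed: an expired cert is rejected unless a verified
    break-glass override is in force."""
    return bool(now < cert_not_after or override_active)
```

The record that authorizes the override (who, why, start, end) is the same object that gets signed, so the audit trail and the control cannot drift apart.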

Algorithm choices. TLS 1.3 only. EC P-256 for leaf certificates (fast handshakes, cheap rotation). EC P-384 for the CA (longer-lived, more headroom). No RSA-1024 anywhere. No TLS 1.2 fallback in the supported matrix — fallback paths are what attackers actually target.
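These choices can be checked mechanically. The sketch below pins a Python `ssl` context to TLS 1.3 and lints a declared key inventory against the policy in the paragraph above. The key labels are plain strings supplied by the caller (a hypothetical inventory format), not values parsed from certificates.

```python
import ssl

ALLOWED_LEAF_KEYS = {"EC P-256"}
ALLOWED_CA_KEYS = {"EC P-384"}


def pin_tls13(ctx):
    """Allow TLS 1.3 only: no downgrade to 1.2 or earlier."""
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    ctx.maximum_version = ssl.TLSVersion.TLSv1_3
    return ctx


def policy_violations(ctx, leaf_key, ca_key):
    """List of human-readable policy problems; empty means compliant."""
    problems = []
    if ctx.minimum_version != ssl.TLSVersion.TLSv1_3:
        problems.append("TLS below 1.3 can be negotiated")
    if leaf_key not in ALLOWED_LEAF_KEYS:
        problems.append(f"leaf key type not allowed: {leaf_key}")
    if ca_key not in ALLOWED_CA_KEYS:
        problems.append(f"CA key type not allowed: {ca_key}")
    return problems
```

Running a check like this in CI against every service's configuration is one way to keep a TLS 1.2 fallback from creeping back into the supported matrix.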

What RTD ships

The Run-True Decision Fraud Decision Engine ships end-to-end TLS 1.3 at every internal hop as part of the on-premise deployment. The flow is: payment switch → nginx (TLS 1.3) → scoring engine (TLS 1.3) → PostgreSQL (sslmode=verify-full) → Redis (TLS scheme-driven) → case management and alerting (TLS 1.3 with mTLS). There is no cleartext hop inside the supported deployment matrix.
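An evaluator can reproduce the "no cleartext hop" claim for any deployment by auditing its connection URLs. The sketch below is not RTD's configuration: the hostnames, hop names, and the `cleartext_hops` helper are hypothetical. It relies only on standard conventions: libpq's `sslmode=verify-full` parameter for PostgreSQL, and the `rediss://`, `https://`, and `amqps://` URL schemes for TLS-wrapped Redis, HTTP, and AMQP.

```python
from urllib.parse import parse_qs, urlparse

ENCRYPTED_SCHEMES = {"https", "rediss", "amqps"}


def hop_is_protected(url):
    """True if the URL implies an encrypted, server-verified connection."""
    parts = urlparse(url)
    if parts.scheme in ("postgresql", "postgres"):
        # verify-full checks both the CA chain and the server hostname
        return parse_qs(parts.query).get("sslmode") == ["verify-full"]
    return parts.scheme in ENCRYPTED_SCHEMES


def cleartext_hops(hops):
    """Names of hops whose URL does not imply TLS, in sorted order."""
    return sorted(name for name, url in hops.items()
                  if not hop_is_protected(url))


# Hypothetical hop inventory for illustration only.
EXAMPLE_HOPS = {
    "engine": "https://scoring.internal:8443/score",
    "database": "postgresql://fde@db.internal:5432/fraud?sslmode=verify-full",
    "cache": "rediss://cache.internal:6380/0",
    "alerts": "amqps://queue.internal:5671/alerts",
}
```

Note that `sslmode=require` fails this check on purpose: it encrypts the connection but does not verify the server's identity.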

mTLS runs between every internal component, not only at the edge, using per-service leaf certificates signed by either the bank's internal CA or a vendor-supplied CA bundled with the product. Banks pick the mode that matches their PKI maturity. The flag to enable full-mesh mTLS is opt-in, so pre-production and lab configurations are not forced into it on day one — but the default deployment for production is secure-by-design, and there is no cleartext fallback path in the supported matrix.

For evaluators and InfoSec reviewers: the downloadable TLS Audit Pack on the FDE on-premise page covers posture summary, audit query catalog, and certificate lifecycle runbook — enough to answer most second-POC-meeting questions without needing a scheduled call. It is ungated.

The specific question worth asking your fraud or risk vendor, before you get into the commercial conversation: show me the connection between your engine and your database. Is it cleartext? If the answer starts with "well, inside our perimeter…" — that is the answer.

Run-True Decision is building a fraud decision engine purpose-built for Southeast Asian banks. Talk to us to learn more.
